Benchmarking Culture

A summary of my presentation at the Cultural AI Conference

What’s been clear so far about this conference on Cultural AI is that the organizers were interested in AI and Culture, broadly construed. That works for me, as my talk ended up being about two ways of construing the culture of benchmarking. Here’s a summarized version of what I said.

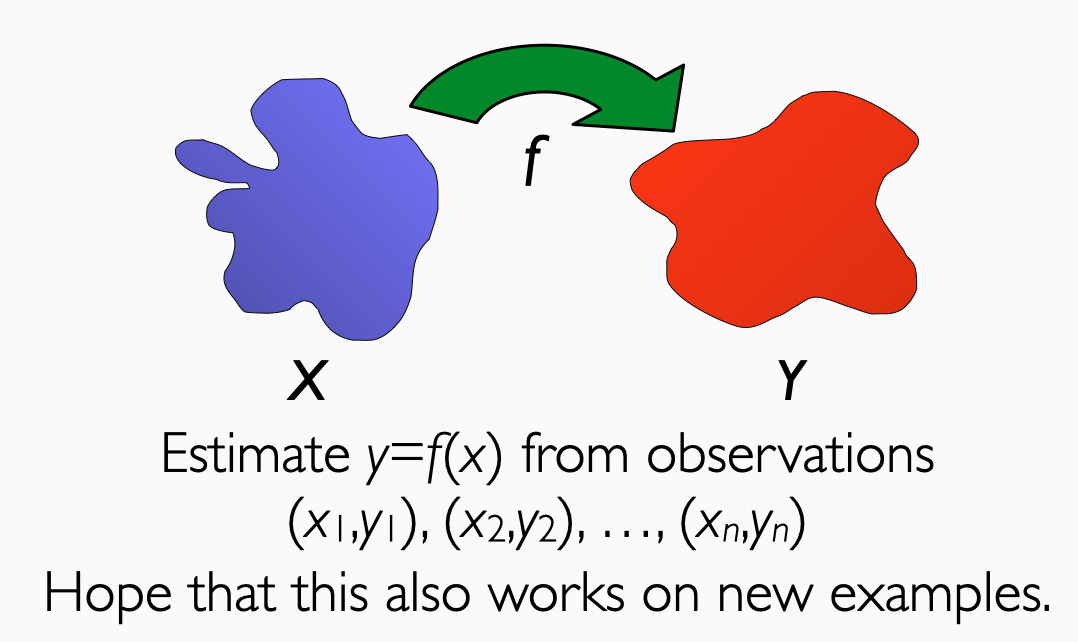

I’ve been a machine learning researcher for nearly 25 years now (yikes), and I opened with a slide describing machine learning that I originally made in 2003.

It still works. Machine learning is prediction from examples, and that’s it. You have some blob of stuff that you call X’s. You have some blob of stuff you call Y’s, and you build computer programs to predict the Y’s from the X’s. The key thing that makes machine learning algorithms different from other kinds of prediction is that you deliberately try to bake in as few assumptions as possible beyond the fact that you have examples.
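To make “prediction from examples” concrete, here is a minimal Python sketch that assumes a 1-nearest-neighbor rule as the predictor. It is one illustrative choice among many, not a method from the talk, and the toy data are made up. The rule assumes almost nothing beyond having labeled examples and a way to measure distance between X’s.

```python
# A minimal sketch of "prediction from examples": store (x, y) pairs and
# predict the y of whichever stored x is closest to the query.
# 1-nearest-neighbor is an illustrative choice, not a method from the talk.
from typing import Sequence


def nearest_neighbor_predict(
    xs: Sequence[Sequence[float]],
    ys: Sequence[int],
    query: Sequence[float],
) -> int:
    """Return the label of the stored example closest to `query`."""
    if not xs:
        raise ValueError("need at least one example")

    def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
        # Squared Euclidean distance between two feature vectors.
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    best = min(range(len(xs)), key=lambda i: sq_dist(xs[i], query))
    return ys[best]


# Toy examples (made up): points near the origin are labeled 0,
# points near (5, 5) are labeled 1.
X = [[0.0, 0.0], [0.5, 0.2], [5.0, 5.0], [4.8, 5.3]]
Y = [0, 0, 1, 1]
print(nearest_neighbor_predict(X, Y, [4.9, 4.7]))  # prints 1
```

Nothing here encodes what X or Y mean; the only “model” is the stored examples themselves.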

I find the online discourse castigating those who say “LLMs are just next token predictors” beyond annoying. They are just next token predictors. And that’s fascinating.[1]

The fascinating part comes in convincing yourself that your function works on new examples. How do you do that? Anybody who has read David Hume knows you can’t do it with formal proof. We convince ourselves through a particular system of evaluation. And then we built an entire engineering discipline on top of this.
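The usual way machine learning convinces itself that a function works on new examples is the holdout method: fit on some examples, then score on examples the fitting procedure never touched. The sketch below assumes a one-dimensional threshold rule and synthetic, noisy toy data; the helper names `fit_threshold` and `accuracy` are hypothetical, chosen only for illustration.

```python
import random


def fit_threshold(xs, ys):
    """Return the threshold t, chosen among the training xs, that minimizes
    training errors for the rule "predict 1 if x >= t, else 0"."""
    def errors(t):
        return sum((x >= t) != bool(y) for x, y in zip(xs, ys))
    return min(xs, key=errors)


def accuracy(t, xs, ys):
    """Fraction of examples on which the threshold rule matches the label."""
    return sum((x >= t) == bool(y) for x, y in zip(xs, ys)) / len(xs)


rng = random.Random(0)  # fixed seed so the toy data and split are reproducible

# Toy data (an assumption for illustration): the label is 1 when x > 0.5,
# with roughly 10% of labels flipped to simulate noise.
data = []
for _ in range(200):
    x = rng.random()
    y = int(x > 0.5)
    if rng.random() < 0.1:
        y = 1 - y
    data.append((x, y))

rng.shuffle(data)
train, test = data[:150], data[150:]  # hold out 50 examples the fit never sees

t = fit_threshold([x for x, _ in train], [y for _, y in train])
train_xs, train_ys = zip(*train)
test_xs, test_ys = zip(*test)
print(f"threshold:         {t:.3f}")
print(f"training accuracy: {accuracy(t, train_xs, train_ys):.2f}")
print(f"held-out accuracy: {accuracy(t, test_xs, test_ys):.2f}")
```

The held-out score is a convention rather than a proof: it speaks to new examples only insofar as they resemble the held-out ones, which is exactly the inductive leap Hume says you cannot formally justify.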

Now, what is evaluation? In our 2025 course, Deb Raji and I adapted Peter Rossi’s definition, which he developed for social scientific program evaluation.

Evaluation is measuring the difference between articulated expectations of a system and its actual performance.

This definition seems reasonable enough, but in a world obsessed with quantification, this sets into motion an inevitable bureaucratic collapse. If you want to make your evaluation legible and fair to all stakeholders, you must make it quantitative. If you want to handle a diversity of contexts, you must evaluate on multiple instantiations and report the average behavior. Quantification has to become statistical. And once you declare your expectations and metrics, everything becomes optimization. Evaluation inevitably becomes statistical prediction.
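Here is a minimal sketch of how the definition collapses into a single statistic, assuming the expectation is stated as a target score and performance is measured in a handful of contexts. The function name, the context labels, and the numbers are hypothetical placeholders.

```python
from statistics import mean
from typing import Mapping


def evaluation_gap(expected: float, scores_by_context: Mapping[str, float]) -> float:
    """Return the stated expectation minus the average observed score.

    A positive value means the system fell short of expectations on average.
    The contexts and scores are hypothetical placeholders.
    """
    if not scores_by_context:
        raise ValueError("need at least one context")
    return expected - mean(list(scores_by_context.values()))


scores = {"context_a": 0.92, "context_b": 0.81, "context_c": 0.88}
gap = evaluation_gap(0.90, scores)
print(f"average score {mean(list(scores.values())):.3f}, shortfall {gap:.3f}")
```

Averaging is what makes the result legible as one number, and it is also what hides the context where the system falls furthest short.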

This bureaucratic loop swallows up not just social scientific program evaluation but engineering evaluation more broadly. If you are calculating mean-square errors, you’re shoehorning your evaluation into statistical prediction. Everyone loves to lean on the artifice of clean statistical facts. Once you have set this stage, machine learning is practically optimal by definition.
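Written out, the mean-square error shows why this framing favors machine learning. It is an average of per-example losses over n examples (x_i, y_i), and once it is declared the metric, choosing a predictor f from some class F reduces to minimizing that same average. The notation (f, F, n) is ours for illustration, not the talk’s.

```latex
\mathrm{MSE}(f) = \frac{1}{n}\sum_{i=1}^{n}\bigl(y_i - f(x_i)\bigr)^2,
\qquad
\hat{f} = \operatorname*{arg\,min}_{f \in \mathcal{F}} \mathrm{MSE}(f)
```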

Machine learning as a discipline has no foundation beyond evaluation. This is a descriptive, not a normative, statement. The most successful machine learning papers work like this: I say that Y is predictable from X by Method M, and you should be impressed. I then make billions of dollars in a startup. Maybe I have to tell a story about how Method M relates to the brain or to mean-field approximations in statistical physics. Fantastic stories don’t seem to hurt.

Now, here’s an invalid AI paper, which a lot of critics like to write: “Y is not predictable from X.” It is impossible to establish this claim. You can’t even establish it for simple methods, because what’s gonna happen is some high school kid is gonna go and change the rules slightly and prove you wrong. Then he will dunk on you on Twitter, gleefully writing “skill issue.”

The logical reconstruction makes the logical positivists roll over in their graves. The field is fueled by pure induction. We progress research programs by demonstration alone. And the way we convince others that our demos are cool is by sharing data and code.

Core to machine learning is the culture of datasets. I’m not sure if some poor soul is still trying to update this Wikipedia page, but the field thrives on shared data with common tasks. Datasets give you an easy path to impress your colleagues. You can argue for the novelty of your Method M because it achieves high accuracy on a dataset that others agree is challenging.

People have turned datasets into literal competitions, starting with the Netflix Prize, moving to the ImageNet Large Scale Visual Recognition Challenge (ILSVRC), and ending with a company that hosts hundreds of competitions. Not to get all monocausal on you, but the ImageNet Challenge is why we use neural networks today instead of other methods. More fascinating is that we declared protein folding solved because AlphaFold did really well in a machine learning competition. Competitive testing on benchmarks can produce Nobel Prizes.

You might be put off by how everything in machine learning becomes an optimization competition. But let’s applaud machine learning for its brutal, ingenuous honesty about how researchers are driven by ruthless competition. If you want to read more on how this works in practice, read Dave Donoho’s construction of frictionless reproducibility, my concurrence and analysis of the mechanism and costs, the history Moritz Hardt and I lay out in Patterns, Predictions, and Actions, or how it fits into the bigger story of The Irrational Decision.[2]

Perhaps the weirdest transition of the last decade was the move from dataset evaluation to “generalist” evaluation. Tracking the GPT series of papers is instructive. GPT-2 declared language models to be general-purpose predictors, but OpenAI made its case with rather standard evaluations and metrics. GPT-3 moved on to harder-to-pin-down evaluations in “natural language inference,” but there were still tables with numbers and scores. With GPT-4, we didn’t even get a paper. We got a press release formatted like a paper, which is fascinating in and of itself.[3] That press release bragged about the LLM’s answers on standardized tests.

Of course, this got people excited, resulting in breathless press coverage and declarations of the end of education and white-collar work. Hype cycles are part of culture, too. Part of the goal of predicting Y from X is impressing people, and the results were very impressive. Based on the reaction, you can’t say that GPT-4 didn’t surpass people’s expectations.

Now, if you live by the evaluation, you die by the evaluation. Notably, Facebook nuked its AI division after flopping on GPT-4-style evaluation. Not only did users think the model sucked, but the company was caught cheating on its evaluations, too. In a flailing attempt to recover, Facebook went out and spent $14 billion to buy some random AI talent willing to report to King Z, and it has thrown orders of magnitude more at its subsequent AI investments. And what did this buy them? Literally Vibes.

We’re in this fascinating world now where research artifacts are consumer products, and the evaluation is necessarily cultural. Nonprofits funded by the same dirty money that funds AI companies might argue that they can measure the power of coding agents with objective statistical evaluations of yore. But agents are evaluated by coders’ experiences and managers’ fever dreams. I wrote this a year ago, and it remains true today: Generative AI lives in the weird liminal space between productivity software and science fiction revolution. The future of generative AI will be evaluated with our wallets. No leaderboard will help us do that.

[1] It should go without saying that what we interface with via most corporate APIs is much more than an LLM now. That’s a topic for another day.

[2] Out today! w00t.

[3] Culture!

If the hard currency of evaluation is, well, hard currency, then I see an interesting feedback loop arising.
