The Rationality of the Language Machines

Are LLMs mathematically rational?

My least favorite part of writing books is their permanence. Minutes after I spend time with a hardcopy, I start seeing a bunch of things I should have added, removed, or modified. I’m not talking about typos—I expect and accept those will be abundant—but rather ways I’d have written the book differently if I could do it again. In particular, this last week of Irrational Decision events crystallized a few things that I wish I had commented on, but maybe couldn’t have.

I’ll spend the next few blog posts going over the questions raised that I wish I had addressed in the book. I was unhappy with my extemporaneous replies, and I’m hoping blogging might bring me more satisfactory answers.

I’ll start with the trillion-dollar elephant in the room: language machines. In our conversation on Tuesday, Lily Hu asked me how LLMs denote a shift away from the mathematical rationality of The Irrational Decision. For a long time, artificial intelligence systems were built by solving problems differently from how people solved them. For example, computers are better at chess than humans, but they do not approximate the way master chess players play. But LLMs, especially the chatbot interaction model that captured global attention and rocketed the valuations of these companies into the stratosphere, uncannily seem to act and respond like us. This seems like a departure from the cold rationality of computation.

The chat interface leans on the ambiguity of language to make for a satisfying interactive experience. Joseph Weizenbaum famously lamented that when his colleagues tried to make a computer appear intelligent, they leaned on psychological parlor tricks. A machine that summarizes in iambic pentameter appears intelligent. A machine that solves a linear program in millions of variables is merely mechanically calculating.

So what does it mean to lean on language machines for decision making? This is a tricky question because LLMs are unfathomably complex software artifacts. If you use them under the harness of a coding agent, you can get them to optimize all sorts of things. For example, they are incredible at making code faster, outperforming any autotuning tool I’ve ever tried. You can easily get them to implement a Bayesian rational choice engine for you.

Certainly, the rationalist weirdos in charge at Anthropic have demanded that Claude be trained to recite back summaries of dogma from the lesswrong.com comments section. So LLMs can and will parrot back the tenets of mathematical rationality to you. But natural language is not rational language. Indeed, the language of mathematical rationality is a Bayesian language game, always working to box out the unmeasurable and unquantifiable. It demands language without ambiguity, but of course, language is always ambiguous, fluid, and evolving.

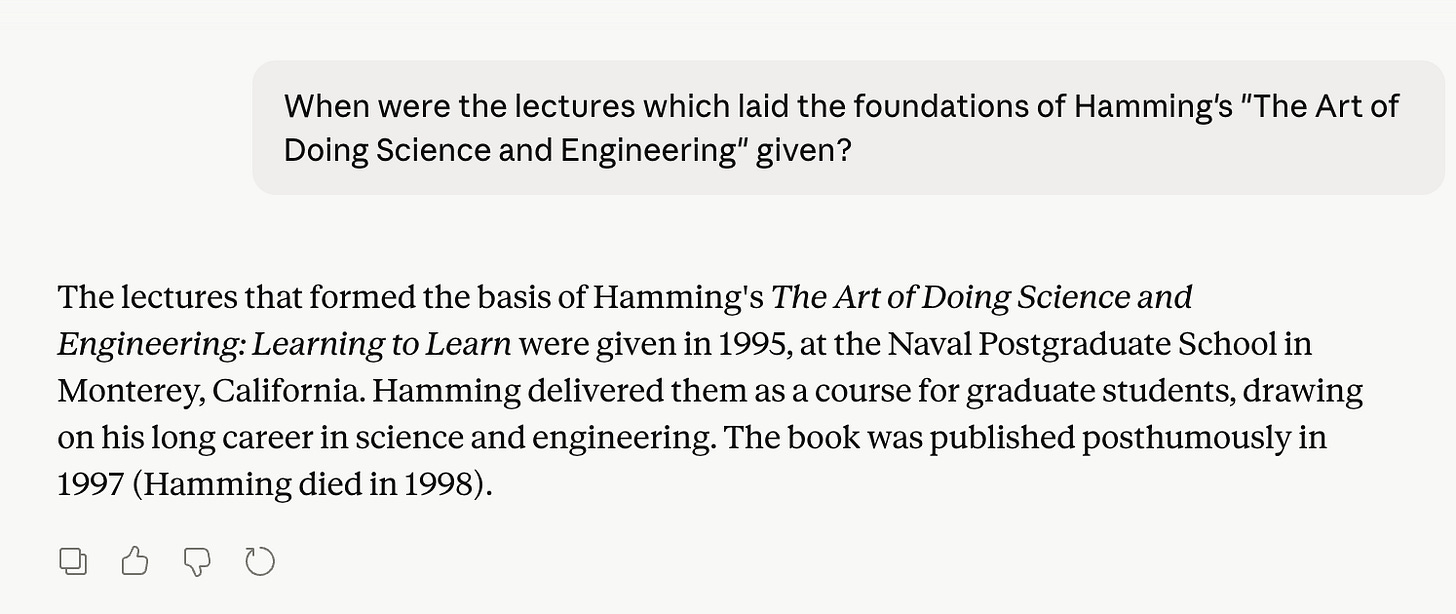

You can try to squeeze out the ambiguity by prompting the machines to reply in logically structured language, like software or mathematics. Yet, even when you try to get the chat interfaces to give you rational answers, you still find perplexing mistakes that aren’t even logical, let alone rational. I liked this glaringly obvious one from last week.

These sorts of errors remain vexingly common, but are becoming increasingly difficult to spot.

The coding machines I mentioned above, with their ability to execute, check errors, and optimize, feel like they are more rational. However, they force programmers to cosplay as project managers. Software engineers have to prompt in natural language that is kind, inspiring, and encouraging. There are massively popular GitHub repos that help you find the right phrasing to make sure your eager agents have the right artificial mindset so they don’t make mistakes. This doesn’t seem very mathematically rational either.

Moreover, in the broader context of decision making, the vast majority of people do not have LLMs write optimization code to compute the decisions for them. Instead, they treat the chat like a consultant, and get interactive text back, sometimes with charts and figures for quantified comfort. Taking advice from these outputs about how to structure your life or business is closer to consulting a Magic 8 Ball than to solving a linear program. Writing in imprecise language and having a system trained to respond nicely certainly isn’t mathematical rationality. It’s what the early mathematicians tried to excise from decision making. What a weird turn.

But if we step back from the chat box, it’s not hard to place LLMs on a linear axis of mathematical rationality. The people who build these things religiously believe in mathematical rationality. They endorse the Bostrom-Russell nonsense that intelligence is attached to singular objective functions, and our robots will kill our dogs to make us coffee. Alignment researchers claim we just have to find the right objective function for post-training, and then we’ll have perfect human companions to build us a utopia of abundance.

Similarly, the guts of LLMs are the same computational pillars I describe in the book. LLMs seem like us, but they are still built upon the very unnatural model of statistically summarizing language and code via maximum likelihood estimation. People don’t do this.
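To make "statistically summarizing language via maximum likelihood estimation" concrete, here is a toy sketch. It is not how any production LLM is built: real systems fit neural networks with billions of parameters by minimizing cross-entropy (which amounts to maximizing likelihood) and then apply post-training. The bigram model, the tiny corpus, and the names `fit_bigram_mle` and `log_likelihood` are all illustrative assumptions, chosen only because the maximum likelihood estimate has a closed form here: count and normalize.

```python
from collections import Counter, defaultdict
import math


def fit_bigram_mle(tokens):
    """Maximum likelihood bigram model: P(next | prev) = count(prev, next) / count(prev)."""
    pair_counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        pair_counts[prev][nxt] += 1
    model = {}
    for prev, counter in pair_counts.items():
        total = sum(counter.values())
        # Normalizing the counts is exactly the likelihood-maximizing choice
        # for a categorical distribution.
        model[prev] = {nxt: c / total for nxt, c in counter.items()}
    return model


def log_likelihood(model, tokens):
    """Log-probability the model assigns to the observed sequence of bigrams."""
    return sum(math.log(model[prev][nxt]) for prev, nxt in zip(tokens, tokens[1:]))


# Hypothetical toy corpus, purely for illustration.
corpus = "the cat sat on the mat the cat ran".split()
model = fit_bigram_mle(corpus)

print(model["the"])  # next-word probabilities after "the"
print(log_likelihood(model, corpus))
```

The fitted table is nothing more than a normalized tally of what followed what in the training text. Scaling the model class up changes the fidelity of the summary, not the kind of objective being optimized.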

Language machines run on complex software, hardware, and network infrastructure, but none of it would look alien to a computer engineer from 2006. Just like every computing system of the past two decades, they are tuned by optimizing engagement, maximizing userbase preferences in glorified A/B tests. The advances are charted by the same sort of rational, average-case benchmarking we’ve been doing in machine learning for decades. We make them better by blindly optimizing without understanding.

And let us not forget that these products were built upon fraud, theft, and rent extraction. They are enriching a small group of incredibly annoying people who claim a mantle of mathematical rationality and are promising to subjugate the rest of us in unemployment work camps. Though I started this post lamenting what I didn’t cover in The Irrational Decision, maybe I got this one right: That we built this wildly irrational technology with mathematical rationality corroborates my argument.

Savage's shadow looms large. "Mathematically rational behavior" is a small-world notion. We live in a large world. When LLMs respond to and generate natural language, they also operate there. In our large world, "rational behavior" is still vaguely pointing at something, but the emphasis is on vaguely: it's often used to analyze whether someone is acting "mathematically rationally" in some particular conjectured projection of the large world into a particular small world, and often the choice of projection is itself contentious.

So of course LLMs aren't "mathematically rational" in the large world, since that's not even a thing. The issue is (as usual) pretending a term that makes sense in the small world carries over uncritically to the large.

One thing I've read that I find interesting is that the Chinese companies are pursuing much more industrial applications of these systems, in robotics and manufacturing, while the hypercapitalists have either become obsessed with a vague notion of AGI and superintelligence, building ever more complex LLMs, or pay lip service to such a goal. I can't quite articulate the thought, but you'd think that in America actual industrial application would drive the innovation. Instead we get ChatGPT 5, which makes the sort of mistake you highlight. That seems to definitively demonstrate that LLMs are just stochastic parrots and don't understand the text they produce, any more than the image-producing systems understand the images they output.