The Poetics of Bureaucracy

Language models are a bureaucratic technology

No conference taking a broad view of contemporary culture can escape the bureaucracy sickos (laudatory). Bureaucracy, with the complex social relations it codifies and entails, is one of the most salient aspects of our culture. Bureaucracies box in massively complex bodies of information through standardization, measurement, and policies. Computers are amazing. They are also the physical embodiment of mass bureaucracy. And no computing technology is more bureaucratic than the large language model.

Several talks at the Cultural AI conference threaded together the complexities of language models and bureaucracy. Henry Farrell kicked things off with a characteristically fantastic talk, describing his evolving view of AI as cultural and social technology. He introduced the notion of “coarse graining,” a new angle he’s working on with Cosma Shalizi.

In physics, coarse graining means “averaging out” a lot of complexity to leave you with bulk behavior that describes useful things. Arguably, it’s how you go from quantum field theory to atomic theory to the ideal gas law.[1] There are levels of approximation, and details are lost in the transitions between layers. However, this loss of detail is often worth it because stacking abstractions lets us think simply inside clean layers. Moreover, surfacing coarse graining helps us understand what to look for when one level of description doesn’t suffice to describe observed phenomena.

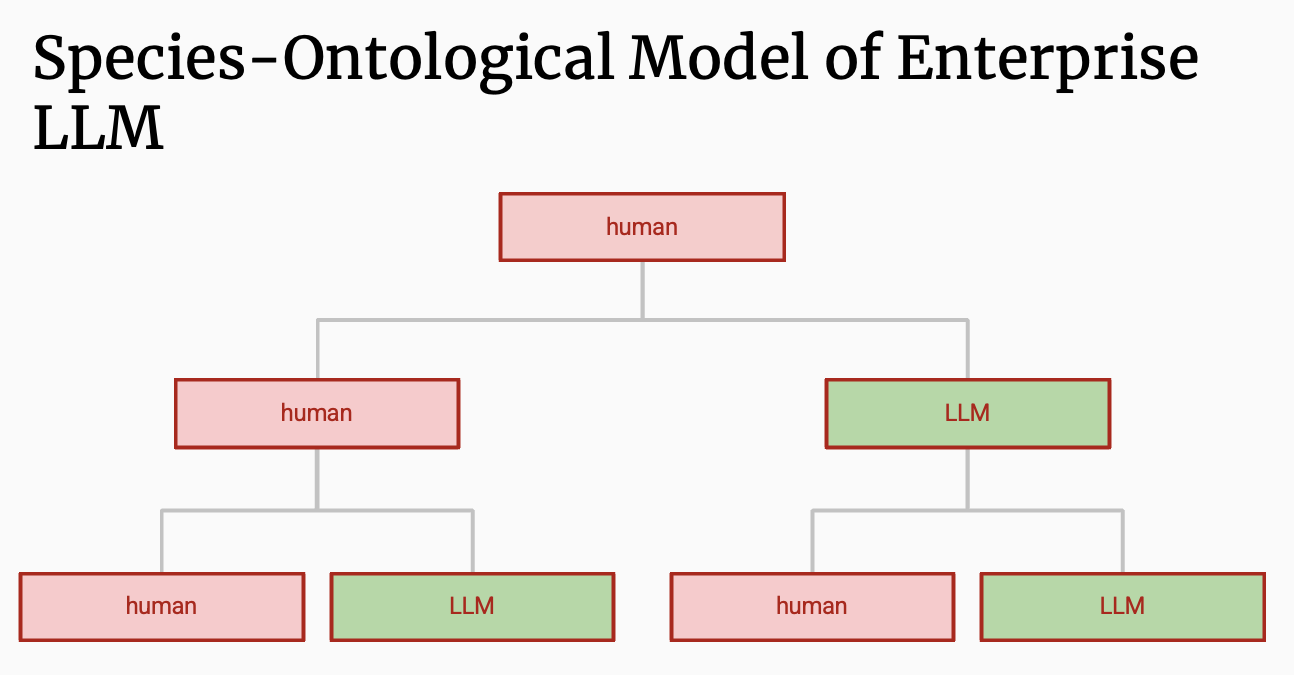

For Farrell, bureaucracies, democracies, and markets are cultural coarse grainings. Bureaucracy establishes relations between parts such that management at one particular location in an organizational web can make decisions without having to understand the fine details at all other locations. It creates a distribution of decision making, simultaneously bound and freed by rules. We can see LLMs as coarse grainings that allow us to access mediated linguistic relationships between end users and the cultural material on which they were trained.

Good bureaucracy should provide constraints that deconstrain.[2] However, so often bureaucracy, in its taming of complexity, obscures sources of power in cultural relationships and the human agency behind decision making. Lily Chumley and Abbie Jacobs both spoke to different angles of this concealment.

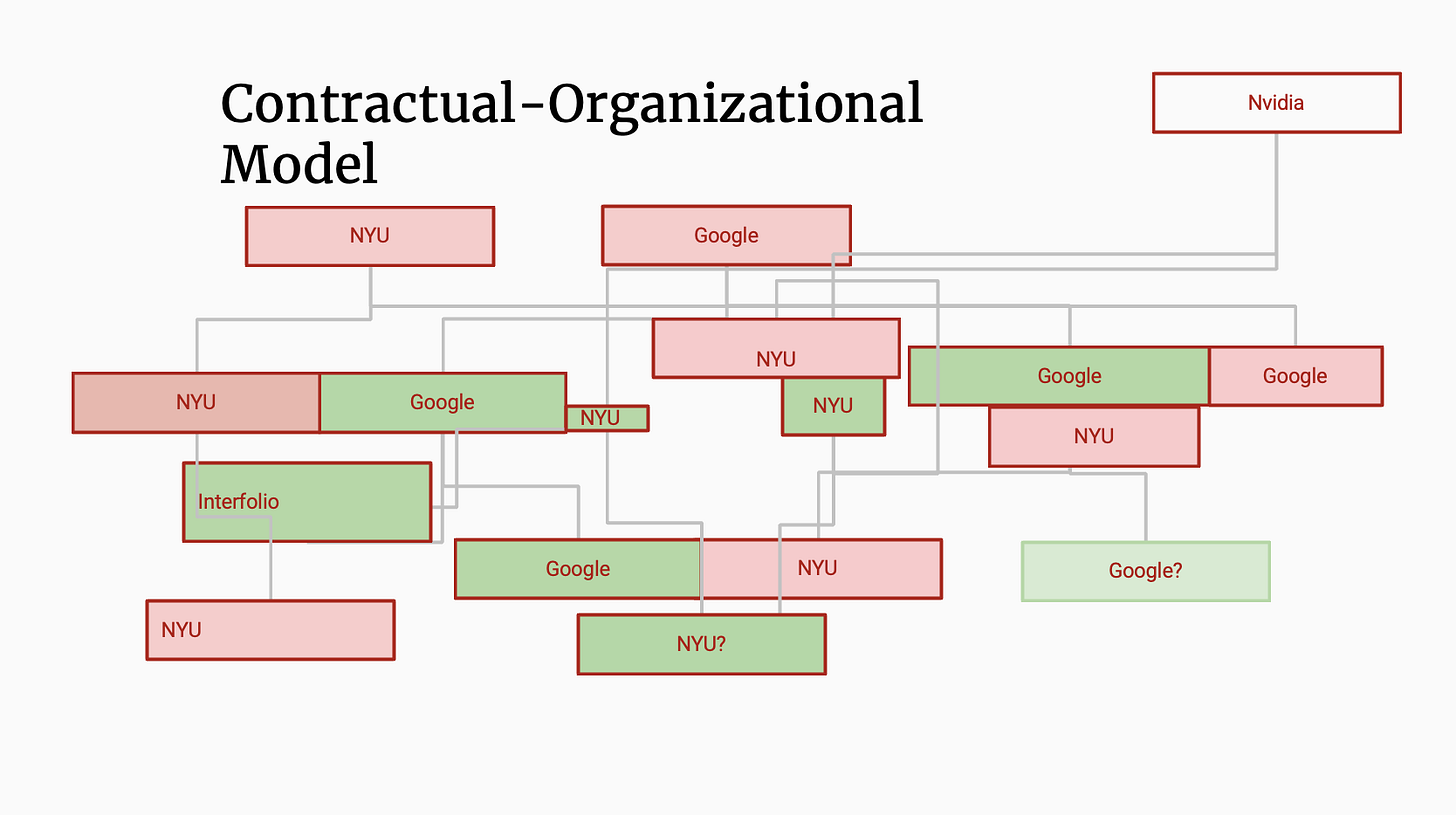

Through the lens of linguistic anthropology, Chumley described how language models obscure contractual relationships underlying enterprise software. The primary interaction with language models is through the chat box. When we squeeze our demands into prompts and skill files that use the institutional language of management, we are mimicking the casual nature of Erving Goffman’s “open state of talk” with a computer. The interaction feels personal rather than transactional. However, your interactions with all of your work software are contingent on inscrutable vendor contracts with complex webs of accountabilities, restrictions, and obligations. The employee is left with only a chat interface that has been RLHFed into a servile caricature of a 1950s secretary. This erases the heavily surveilled, legally bound, hyper-monetized relationships between corporate behemoths.

Chumley illustrated this through the SaaS web on the academic campus. Though we feel like we’re working with LLMs as if they were co-workers, every interaction with an LLM, web interface portal, or training is mediated by a complex contract with giant corporations, be they Elsevier (who own Interfolio), Salesforce, SLATE Technolutions, Google, Microsoft, NVIDIA, OpenAI, or Anthropic. It is a move of power away from people to a fabric of capital. Gideon Lewis-Kraus commented that these power shifts from engineering to capital have been symptomatic of post-Cold War America and have had dire consequences, as in the example of Boeing.

Chumley extended her contractual analysis to the bureaucratic war machine that Kevin Baker has been so eloquently writing about. Big Tech owns AI, so this poses complex risks to the financial order as these companies are too big to fail. And yet, Big Tech is really small compared to the state. The relationships between the tech companies and the government established through military contracting are geopolitical. This means that even if we had a functioning Congress,[3] the regulation of military AI would be ensnared in transnational agreements. Not only is the use of AI in warfare a smokescreen to avoid talking about the people who control decisions of violence, but it further entangles geopolitics in a big contractual mess.

From the perspective of measurement theory, Abbie Jacobs discussed how the language of governance, when coarse-grained into AI, creates new meaning. Jacobs argued that operationalizing language in the context of governance always requires conceptualizing how to measure the concepts that language names. And this measurement and quantization are often not talked about by those doing the coding. We see this sort of talk about computing systems all the time. Words like “high-quality,” “relevant,” “toxic,” “harmful,” “age-appropriate,” “safe,” “responsible,” “fair,” and “intelligence” are turned into rigid measurements by communities of coders, researchers, and policymakers. This operationalization through bureaucratic technology creates a new kind of coarse graining in which words gain meaning through their institutionalization. Arguments at this operationalized level themselves become exclusionary. Jacobs leans on measurement theory from the quantitative social sciences, arguing that “Measurement is the (usually hidden, implicit, diffuse) process through which these concepts are instantiated and made real.”

Measurement itself is governance. I associate this assertion with Theodore Porter, though he’d probably credit Horkheimer and Adorno’s Dialectic of Enlightenment. Jacobs argues that we have to bring such measurement to the surface of social technology before we go about asking our coding agents to coarse-grain it. If we can uncover the measurement process itself, then these hidden webs of governance perhaps become more legible to all of us caught in the middle. By fighting about operationalization, you are implicitly fighting about values. You are fighting about how the state sees you.

This will be my last dispatch on the Cultural AI conference for now. I don’t think I fully did justice to the speakers’ arguments or to the discussion at the conference, but the talks will be available on YouTube soon.

I’ll close with a few thoughts about “conferences” more generally. We use the same word to describe an academic gathering of ten people as one of fifty thousand, but those meetings couldn’t be more different. The one thing I wish we were better at was marking the proceedings of these small workshops in some non-ephemeral state. There is value in simply getting people in a room and then seeing influential intellectual artifacts manifest in later work. Some conversations are better when everyone knows there will be no permanent record. Not every conversation needs to become an Overleaf. Still, capturing something about the moment has value, too. I guess Max and I are blogging a bit, and that’s not nothing. There will be YouTube videos, as I have mentioned. But I’ve been thinking a lot about what it would mean to organize, archive, and coarse grain these small moments of intellectual discourse. To be continued.

[1] Real heads know that jumping between these abstraction levels is far less cut and dried than the physicists want us to believe.

[2] Feel free to share examples of good bureaucracy in the comments.

[3] LOL.

I am sure that what happened in those conversations is the most interesting intellectual work going on right now when it comes to AI (at least to me and a growing handful of others). That said, the value of your blogging for those who weren't there is its coarseness, which leaves a lot of room to think, as I am now, about your description of what Chumley said and why examples of good bureaucracy are key. The videos won't capture the scene in any important sense, nor is it likely that any peer-reviewed papers will do so. Informal writing of the talks and about the talks is perhaps best positioned to convey something of the moment, and of the movement of the ideas in play.

Really enjoyed these conference blogs. I think we're getting dangerously close to a ben recht blog with the phrase "always already" in it